NVIDIA's Bold Bet: The Vera CPU Charges into the Server Arena

NVIDIA, a company synonymous with GPU dominance, is making its most direct assault yet on the lucrative, high-performance CPU market. The launch of the NVIDIA Vera CPU, an Arm-based processor in full production as of Q1 2026, isn't just a new product; it's a strategic declaration of war against established server CPUs such as Intel Xeon, AMD EPYC, and even Amazon's in-house Graviton chips. Jensen Huang's ambition to transform NVIDIA into a premier CPU vendor is well-known, and the Vera CPU is a cornerstone of that vision, pushing NVIDIA further toward becoming an "all-encompassing computing ecosystem".

Named after the trailblazing American astronomer Vera Florence Cooper Rubin, this chip is purpose-built for the escalating demands of artificial intelligence. While we've seen NVIDIA's Grace CPU in tightly integrated Grace-Hopper Superchips before, the Vera CPU’s availability as a standalone offering signals a significant shift, allowing greater flexibility in data center and AI infrastructure design. It’s a move that immediately sent ripples through the market, with Intel and AMD seeing their shares dip upon the announcement.

Engineering for the AI-Native Data Center

At its core, the Vera CPU is designed to be the central processing unit in full-stack AI data center architectures. Its primary directive is "agentic reasoning": coordinating vast data movement, memory allocation, and intricate workflows across GPU-accelerated systems so that those expensive GPUs stay fully utilized. This focus reflects NVIDIA's understanding that even the most powerful GPUs can be bottlenecked by inefficient CPU orchestration.

Here's why the Vera CPU's key highlights matter, and where we inject a dose of healthy skepticism:

- Optimized for AI: NVIDIA claims high efficiency for both AI training and inference, from multimodal AI agents to long-context reasoning tasks. Given NVIDIA's AI heritage, this isn't surprising, but the real-world efficiency gains will be the ultimate test.

- Performance Leap: NVIDIA states that Vera delivers "2x the performance and efficiency gains in data processing, compression, and CI/CD compared to the prior-generation NVIDIA Grace CPU", which is impressive if it holds. The Grace CPU, with its 72 Arm Neoverse V2 cores, already showed strong competitiveness against Intel Xeon and AMD EPYC in some benchmarks, particularly in efficiency. Doubling that performance with Vera's 88 custom "Olympus" Armv9.2 cores would position it as a serious contender. However, the "prior-generation" qualifier matters: the baseline is NVIDIA's own earlier chip, not today's x86 flagships, so we'll be watching closely for independent benchmarks that measure Vera against the absolute performance of the latest Xeon and EPYC parts.

- Energy Efficiency: "Industry-leading energy efficiency" is crucial for large-scale data centers, where power consumption and cooling costs are enormous. Grace already excelled here, often delivering "2x more performance for the same power envelope as the competition". If Vera builds on this, it could be a major cost-saver for hyperscalers.

- Monolithic Architecture: A unified monolithic die design, according to NVIDIA, maximizes throughput, energy efficiency, and GPU utilization by avoiding cross-chiplet communication. This stands in contrast to the prevalent chiplet designs from competitors like AMD and Intel, which split a large chip into smaller dies to gain manufacturing yield and flexibility. While monolithic designs can offer lower latency due to physical proximity, they traditionally come with higher manufacturing costs and lower yields for very large, complex dies. NVIDIA's decision here is a bold one, betting on optimized integration over modularity.

- Advanced Multithreading (NVIDIA Spatial Multithreading): This feature physically partitions core resources so that the balance between performance and density can be optimized at runtime, which is an intriguing approach. It could allow data centers to fine-tune resource allocation based on specific workload demands, a flexibility that's always welcome.

- Memory-Bound Workload Excellence: Vera is designed for memory-intensive tasks like agentic AI pipelines, data preparation, KV-cache management, and HPC simulations. This is a clear acknowledgment of the memory bottleneck often faced by modern AI and HPC workloads.

- High-Speed Interconnect: The second-generation NVIDIA Scalable Coherency Fabric (SCF), with 3.4 TB/s of bisection bandwidth, and second-generation NVLink-C2C, offering 1.8 TB/s of coherent bandwidth, are absolutely critical. These interconnects ensure seamless, high-speed data sharing, directly addressing a common pain point in highly accelerated systems where data transfer can be a major bottleneck. For comparison, the Grace CPU's SCF provided 3.2 TB/s of bisection bandwidth.
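
To put the generational figures in the list above into perspective, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted in this article; the variable names are ours, and the "implied per-core gain" rests on the assumption that NVIDIA's 2x claim describes whole-chip throughput that scales linearly with core count, which NVIDIA has not stated.

```python
# Figures quoted in the article. The per-core estimate is illustrative only.
GRACE_CORES = 72
VERA_CORES = 88
CLAIMED_SPEEDUP = 2.0     # NVIDIA's "2x" claim versus Grace (selected workloads)
GRACE_SCF_TB_S = 3.2      # Grace SCF bisection bandwidth
VERA_SCF_TB_S = 3.4       # Vera SCF bisection bandwidth

# How much of the claimed gain could come from simply having more cores?
core_growth = VERA_CORES / GRACE_CORES

# ASSUMPTION: throughput scales linearly with core count, so whatever is
# left over must come from per-core improvements (IPC, clocks, memory).
implied_per_core_gain = CLAIMED_SPEEDUP / core_growth

# Generational change in on-die fabric bandwidth.
scf_growth = VERA_SCF_TB_S / GRACE_SCF_TB_S

print(f"Core count growth:        {core_growth:.2f}x")
print(f"Implied per-core gain:    {implied_per_core_gain:.2f}x")
print(f"SCF bandwidth growth:     {scf_growth:.2f}x")
```

Under that assumption, the extra cores account for only a modest share of the claimed doubling, so most of it would have to come from per-core and memory-system improvements. That is one more reason to wait for independent benchmarks.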

While the Vera CPU is capable of standalone operation for a variety of workloads like analytics, cloud, system orchestration, storage, and HPC, its ultimate design intent is clear: native integration with NVIDIA Rubin GPUs as part of the broader NVIDIA Vera Rubin platform. When paired, the NVLink-C2C effectively creates a unified memory system with hardware-enforced isolation, a powerful proposition for tightly coupled AI systems.

Vera CPU: Specifications That Demand Attention

The specifications of the Vera CPU speak volumes about its design goals.

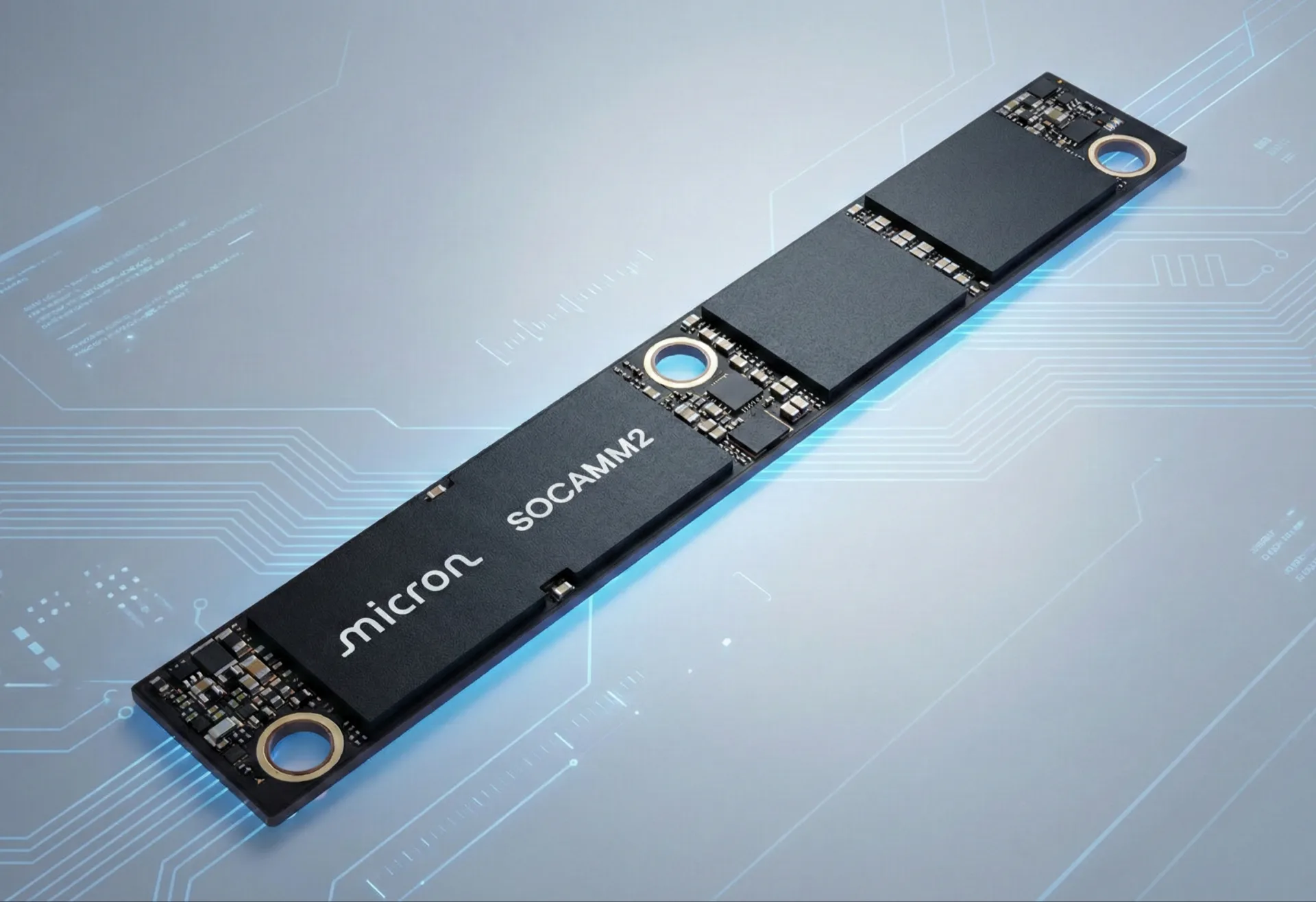

The 88 custom Arm "Olympus" cores are a significant upgrade from Grace's 72 Arm Neoverse V2 cores. Pairing them with 1.5 TB of LPDDR5X memory, offering up to 1.2 TB/s of bandwidth, is a clear play for memory-intensive AI workloads. LPDDR5X, especially in a SOCAMM (Small Outline Compression Attached Memory Module) form factor, is known for its high bandwidth and superior power efficiency compared to traditional DDR5 RDIMMs, often offering 2.5x higher bandwidth while consuming significantly less power. This choice underscores NVIDIA's focus on performance-per-watt, a critical metric in power-hungry data centers. The impressive 227 billion transistors also signal a highly complex and powerful design, though we question how much of this transistor count is dedicated to pure CPU logic versus the integrated interconnects and other features that facilitate GPU synergy.
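
As a quick illustration of what those memory figures mean per core, the sketch below divides the quoted capacity and bandwidth evenly across the 88 cores. The even split and the decimal unit conversion (1 TB = 1000 GB) are simplifying assumptions of ours; real workloads will not share memory this uniformly.

```python
# Figures quoted in the article for a single Vera CPU.
CORES = 88
MEMORY_TB = 1.5
BANDWIDTH_TB_S = 1.2
GB_PER_TB = 1000  # ASSUMPTION: decimal units

# ASSUMPTION: capacity and bandwidth are shared evenly across all cores.
bandwidth_per_core_gb_s = BANDWIDTH_TB_S * GB_PER_TB / CORES
capacity_per_core_gb = MEMORY_TB * GB_PER_TB / CORES

# Lower bound on the time to stream through all of memory once at peak rate.
full_sweep_seconds = MEMORY_TB / BANDWIDTH_TB_S

print(f"Bandwidth per core: {bandwidth_per_core_gb_s:.1f} GB/s")
print(f"Capacity per core:  {capacity_per_core_gb:.1f} GB")
print(f"Full memory sweep:  {full_sweep_seconds:.2f} s")
```

The sweep time is a useful sanity check for memory-bound jobs such as KV-cache management: no workload can touch the entire memory pool faster than capacity divided by peak bandwidth.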

Native FP8 support is a forward-looking feature, directly catering to the precision requirements of many modern AI models. This allows certain AI workloads to run directly on the CPU with enhanced efficiency.

The Ecosystem: Adoption and NVIDIA's AI Cloud Vision

The Vera CPU is now in full production, and early adoption is key. CoreWeave is highlighted as one of the first cloud providers and customers to integrate NVIDIA Rubin-based systems, including the Vera CPU, into its AI cloud platform by the second half of 2026. NVIDIA's substantial $2 billion investment in CoreWeave further cements this partnership, suggesting a deep strategic alignment to accelerate AI infrastructure deployment. This move is not just about selling chips; it's about building out an entire AI cloud ecosystem where NVIDIA hardware is the foundation.

For hyperscale deployments, the NVIDIA DGX Vera Rubin NVL72 offers a turnkey AI infrastructure solution. This rack-scale system, integrating 36 NVIDIA Vera CPUs and 72 NVIDIA Rubin GPUs with a colossal 75 TB of total fast memory, aims to deliver "unparalleled performance for the most demanding AI workloads." It's clear that NVIDIA isn't just selling individual CPUs; they're selling tightly integrated, full-stack solutions.
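
The rack-level numbers can be cross-checked against the per-CPU figures quoted earlier. The sketch below assumes that "total fast memory" is the sum of the CPUs' LPDDR5X and the GPUs' on-package memory; this article's sources do not break the 75 TB down, so treat the per-GPU result as an inference rather than a specification.

```python
# Figures quoted in the article for the Vera Rubin NVL72 rack.
CPUS_PER_RACK = 36
GPUS_PER_RACK = 72
CPU_MEMORY_TB = 1.5           # LPDDR5X per Vera CPU
TOTAL_FAST_MEMORY_TB = 75
GB_PER_TB = 1000              # ASSUMPTION: decimal units

# Memory contributed by the CPUs alone.
cpu_memory_tb = CPUS_PER_RACK * CPU_MEMORY_TB

# ASSUMPTION: everything not attached to a CPU is GPU memory.
gpu_memory_tb = TOTAL_FAST_MEMORY_TB - cpu_memory_tb
gpu_memory_per_gpu_gb = gpu_memory_tb * GB_PER_TB / GPUS_PER_RACK

print(f"CPU-attached memory: {cpu_memory_tb:.0f} TB")
print(f"Remaining for GPUs:  {gpu_memory_tb:.0f} TB")
print(f"Implied per GPU:     {gpu_memory_per_gpu_gb:.0f} GB")
```

If the assumption holds, the CPUs supply well over half of the rack's fast memory, which underlines how central Vera's LPDDR5X pool is to the platform.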

It’s important to reiterate that the Vera CPU is purpose-built for AI infrastructure and data centers, not for gaming PCs or general-purpose consumer computing in the short term. NVIDIA has separate plans for an Arm CPU for PCs, codenamed N1X and based on the GB10 "Superchip", but Vera is firmly aimed at the enterprise. This distinction is crucial, as the demands and optimizations for these markets are vastly different.

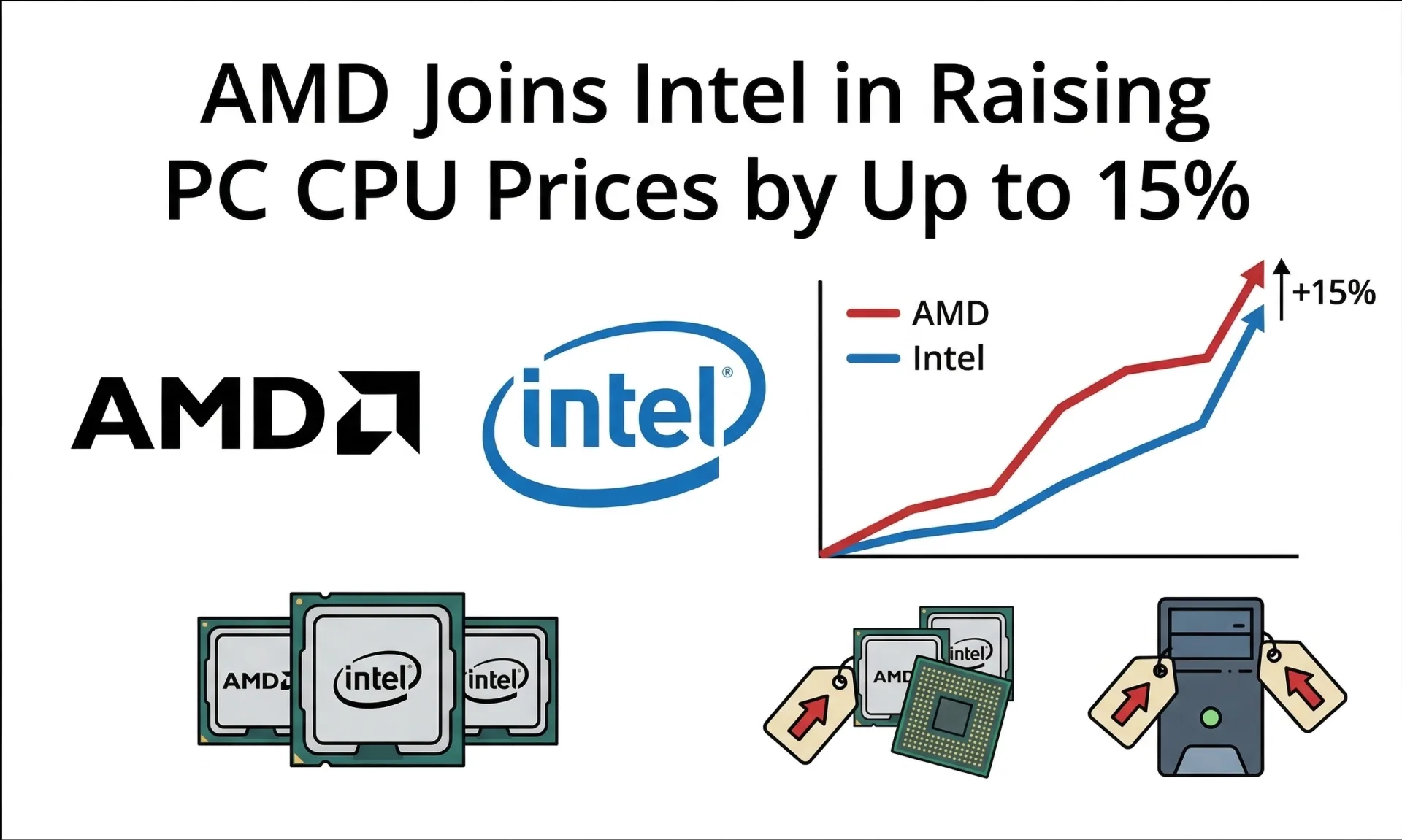

The server CPU market is certainly heating up. Arm-based server chips are gaining traction, forecast to account for around 9% of CPU revenue in 2026 and potentially 10-12% by 2027, driven by cloud providers seeking energy- and cost-efficient options. Dell'Oro Group reported Arm CPUs capturing a quarter of the server market in Q2 2025, a significant jump from a year prior, largely fueled by NVIDIA's Grace-Blackwell rack-scale platforms. While Arm's goal of 50% market share by the end of 2025 may have been ambitious, NVIDIA's entry with the Vera CPU certainly adds significant weight to the Arm ecosystem. We anticipate fierce competition as Intel and AMD respond with their own innovations to maintain their dominant x86 positions. The Vera CPU is a powerful statement from NVIDIA, and we're eager to see how it reshapes the landscape of AI computing.
