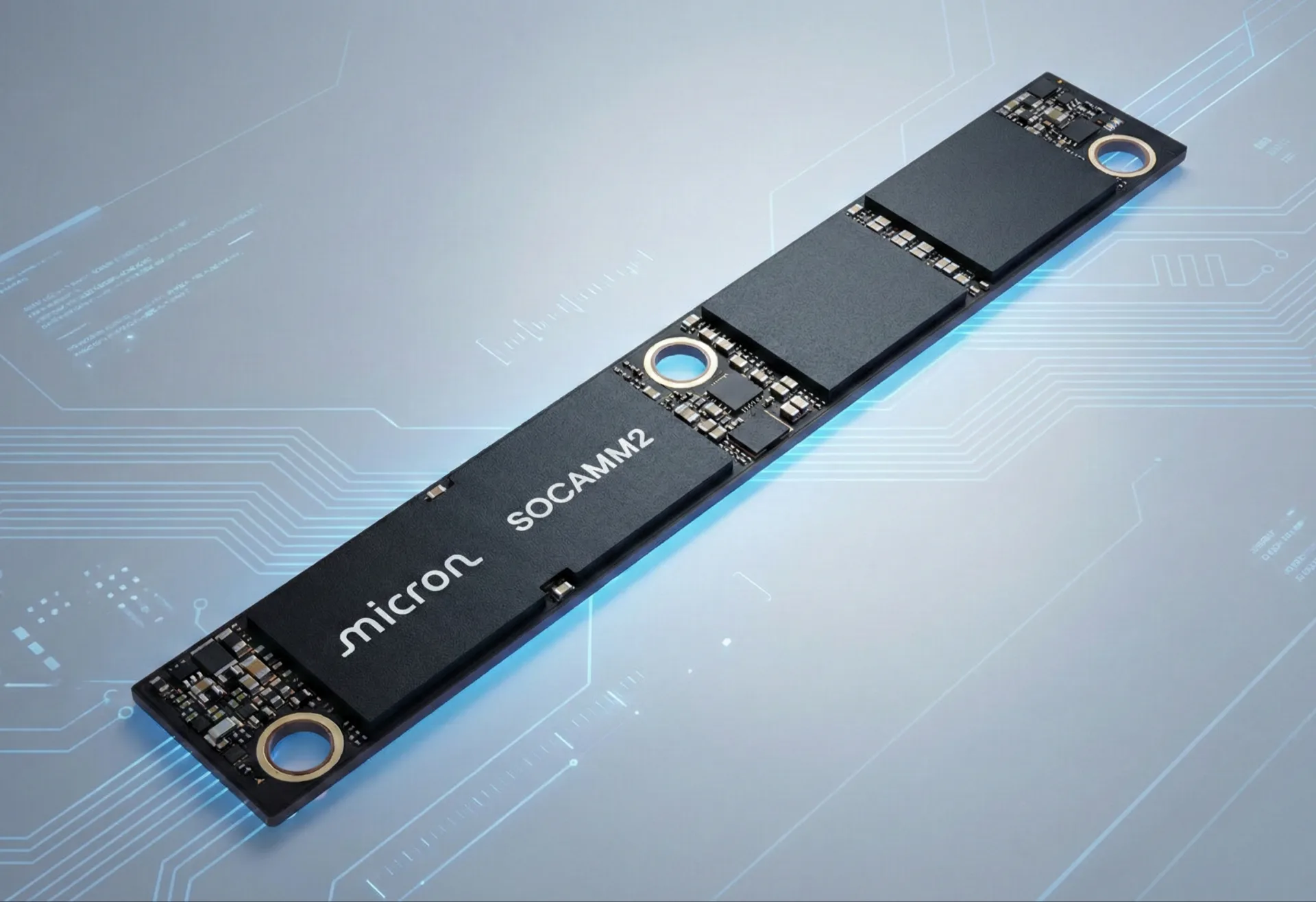

The intense competition to fuel the world's exploding artificial intelligence infrastructure is reaching a fever pitch. For years, High Bandwidth Memory (HBM) has reigned supreme as the preferred choice for cutting-edge AI accelerators. However, we're now witnessing the emergence of a new memory standard, SOCAMM2, poised to redefine the playing field. As we move through early 2026, industry whispers suggest that silicon powerhouses AMD and Qualcomm are ready to integrate SOCAMM2 into their forthcoming AI product lines. This move signals a significant shift, aiming to reshape memory capacity, power efficiency, and the long-term system economics within the fiercely competitive AI data center market.

The AI Memory Bottleneck: It's Getting Personal

For too long, the raw computational horsepower of AI accelerators grabbed all the headlines. Yet, in our view, the true hardware bottleneck for AI is rapidly evolving from raw FLOPs to memory. The proliferation of massive large language models (LLMs) and complex multimodal models (MMMs) has created an insatiable demand for immense memory capacity, exceptional power efficiency, and scalable architectures. These models require unprecedented amounts of data to reside "near" the processing units, not just for the initial training phases but, perhaps even more critically, for inference – the daily operation of AI models in real-world applications.

NVIDIA, a company that commands over 90% of the AI chip market, didn't just stumble into this. They effectively pioneered the System-on-Chip Advanced Memory Module (SOCAMM) concept, championing the SOCAMM2 standard with broad industry backing and embedding it within their Vera Rubin accelerators. While NVIDIA’s initial foray with SOCAMM1 reportedly faced several technical hurdles, forcing a significant redesign for SOCAMM2, their continued commitment underscores the standard's perceived importance. Now, it appears AMD and Qualcomm are following suit, not merely adopting the standard but reportedly exploring unique implementations that could carve out their own competitive niches.

SOCAMM2: A New Active Workspace for AI

We see SOCAMM2 as a crucial evolutionary step in memory deployment. It effectively bridges the gap between the traditional soldered LPDDR (Low Power Double Data Rate) memory, common in mobile devices, and the socketed, often less power-efficient, server DIMMs. Positioned intelligently between the ultra-fast but capacity-limited HBM and conventional system DRAM, SOCAMM2 establishes a new "Active Workspace" or "near-end memory pool" specifically for AI agents.

Here's why SOCAMM2 matters:

- Modular and Swappable: Unlike the permanently soldered LPDDR, SOCAMM2 modules are detachable. This isn't just a minor convenience; for data centers, it’s a critical factor for platform longevity, enabling easier upgrades, replacements, and enhanced serviceability. We believe this drastically improves total cost of ownership over time.

- LPDDR-based: Leveraging LPDDR5X today, with LPDDR6 on the horizon, SOCAMM2 benefits from inherently power-efficient technology. LPDDR6, in particular, promises not only significantly increased bandwidth (a projected 38.4GB/s per channel) but also around 20% lower power consumption than LPDDR5X, a crucial detail for hyperscale AI operations.

- High Capacity and Bandwidth: These modules are no slouch, with Micron already sampling units up to 192 GB. In systems like NVIDIA's GB300 NVL72, LPDDR5X-based SOCAMM2 can contribute a staggering 18TB of memory with a system bandwidth of approximately 16 TB/s. Even individual modules offer transfer speeds of 9,600 MT/s, delivering twice the bandwidth of traditional DDR5 RDIMMs.

- Energy Efficiency: By embracing LPDDR technology, SOCAMM2 inherently consumes less power than standard DRAM-based RDIMMs. This is absolutely critical for massive AI deployments where every watt contributes significantly to spiraling energy costs.

- Compact Footprint: Its design simplifies board routing and cooling in dense systems – a necessity for the high-density rack-scale AI systems dominating the market.

- JEDEC Standard: The expectation that SOCAMM2 will comply with JEDEC JESD328 is vital. This ensures interchangeability and vendor neutrality, which we anticipate will foster a healthier and more competitive supply chain, preventing any single vendor from dictating terms.

In essence, SOCAMM2 enables AI models to retain millions of tokens and long-lived states directly within the accelerator's "near-end memory pool," drastically cutting down on cross-node traffic and boosting overall system efficiency. While its throughput is acknowledged to be slower than HBM and latency can be higher than standard DDR5, we believe these limitations are often overstated. For the continuous, sustained throughput demands of many AI workloads, especially inference, these factors are often amortized and overshadowed by the capacity and power advantages.

Qualcomm's Bold Bid for Inference Dominance

Qualcomm, a long-time titan in mobile processors, officially crashed the AI data center party on October 27, 2025, with the unveiling of its AI200 and AI250 inference accelerators. These chips, slated for sale in 2026 (AI200) and 2027 (AI250), are a direct challenge to NVIDIA and AMD, with a laser focus on AI inference for LLMs and MMMs.

Qualcomm's strategy, in our analysis, is built on a few core tenets:

- Energy Efficiency and TCO: With a quoted rack system power draw of 160 kW for their liquid-cooled solutions, Qualcomm is prioritizing a low total cost of ownership. This perfectly aligns with SOCAMM2's inherent LPDDR-derived power advantages. We consider this a smart play, as energy costs are a major concern for data center operators.

- Massive LPDDR Capacity: The AI200 and AI250 cards are designed to pack up to 768 GB of LPDDR memory each. This immense capacity is ideal for handling the gargantuan context windows and parameters of modern AI models, potentially delivering what Qualcomm claims to be "more memory capacity for less money" than its competitors. We're eager to see if this claim holds true in real-world deployments.

- Innovative Memory Architecture: The AI250, in particular, boasts a "near-memory computing" design. Qualcomm claims this delivers a "generational leap in efficiency and performance" and "greater than 10x higher effective memory bandwidth" compared to current NVIDIA GPUs, alongside significantly lower power consumption. While these are ambitious claims, such an architecture would undeniably benefit from SOCAMM2's ability to act as a localized "Active Workspace." We remain somewhat skeptical of "10x" claims until independent benchmarks emerge, but the direction is clear.

- Rack-Scale Systems: Qualcomm isn't just selling chips; their offerings come as full, liquid-cooled server rack systems, capable of orchestrating up to 72 chips as a single computer. This matches the scale and ambition of NVIDIA and AMD's own rack-scale solutions.

- Unique PMIC Integration: Reports indicate Qualcomm, alongside AMD, is exploring a SOCAMM module design that incorporates power management integrated directly onto the module. This design, featuring square-shaped modules with four DRAM chips, simplifies system board manufacturing and enables tighter voltage control, which is critical for maintaining signal integrity at the high LPDDR5X/LPDDR6 data rates. This innovative approach could offer a tangible advantage in reliability and performance.

Qualcomm's early partnership with Saudi Arabia’s Humain, committing to deploy systems using up to 200 megawatts of power starting in 2026, certainly demonstrates real-world market traction for their inference-focused, LPDDR-heavy approach. This is not just a theoretical play; it's a concrete investment.

AMD's Strategic Position and OpenAI's Shadow

While AMD's specific SOCAMM2 adoption plans remain less granular than Qualcomm’s at this stage, reports suggest they are also integrating it into their upcoming AI product lines. This makes immense strategic sense, given their direct competition with NVIDIA. AMD already possesses a robust portfolio of server CPUs and GPUs for AI, and integrating SOCAMM2 would undeniably enhance their memory capacity and speed offerings – a crucial differentiator for competitive performance.

We anticipate AMD’s interest mirrors Qualcomm’s: leveraging LPDDR for superior power efficiency and increased capacity. They, too, may adopt the on-module PMIC design for similar benefits. The broader competitive landscape is also significant. OpenAI's reported plans to not only buy chips from AMD but potentially take a stake in the company could provide AMD with considerable momentum in the AI chip market, putting further pressure on NVIDIA’s seemingly unassailable dominance. We believe this potential alliance could be a true accelerant for AMD, challenging the duopoly narrative of AI hardware.

The Broader Implications: A Data Center Showdown

The anticipated embrace of SOCAMM2 by AMD and Qualcomm signals a united industry move toward optimized memory solutions for the exploding AI market. With the AI server sub-market value projected to soar to $298 billion by 2025, accounting for over 70% of the total server industry, the stakes couldn't be higher.

This shift, however, is not without its hurdles:

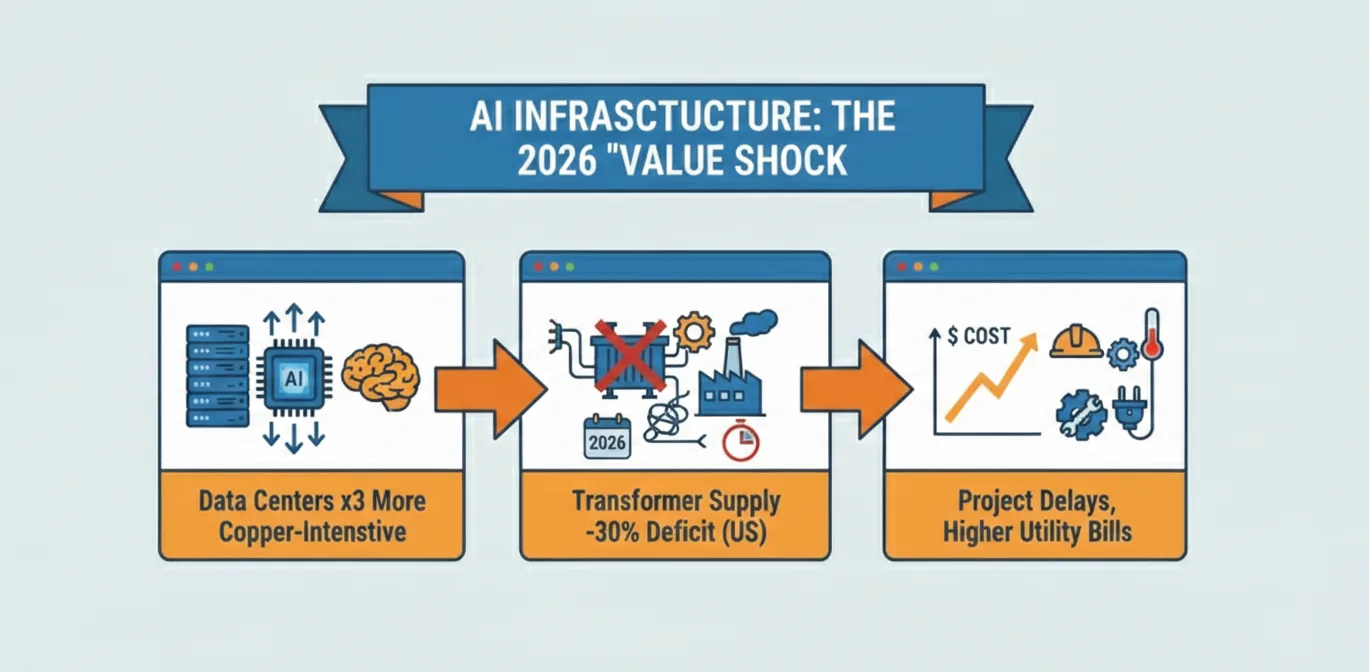

- Thermal Management: Packing numerous LPDDR devices into a compact module inevitably creates significant heat density challenges. We believe thermal durability remains a key concern for LPDDR-based AI chips like Qualcomm's. Direct liquid cooling, as observed in Qualcomm's rack systems, is rapidly evolving from a niche solution to a fundamental necessity.

- LPDDR6 Development and Costs: While promising, LPDDR6 is currently still in the technical verification stage. Uncertainties surrounding compatibility, mass production costs, and long-term stability after large-scale application persist. The industry needs LPDDR6 to materialize efficiently for SOCAMM2 to fully hit its stride.

- Commercialization Hurdles: SOCAMM2’s high transfer speed and specialized instruction sets may necessitate hardware upgrades for older server memory controllers, potentially creating a slower adoption curve for some players. Moreover, the R&D and manufacturing costs for SOCAMM2 modules, compared to traditional DDR5, mean its true value proposition rests on the total system economics rather than just the individual module price.

- NVIDIA's Ecosystem Lock-in: The most significant challenge for any new AI chip entrant remains NVIDIA's formidable CUDA software ecosystem. This deeply entrenched platform creates a powerful lock-in effect for developers and existing infrastructure. Alongside this, ensuring technical compatibility with existing orchestration tools will be critical for AMD and Qualcomm to woo customers away from the incumbent.

Despite these challenges, the multi-vendor momentum behind SOCAMM2 – including NVIDIA, Samsung, SK Hynix, Micron, and Foresee (with a customized version for the Chinese market) – indicates a strong collective belief in its long-term potential. While volume availability for SOCAMM2 isn't expected until early 2027, the groundwork being laid by Qualcomm and AMD in early 2026 unquestionably sets the stage for a dramatic reshuffling of the AI memory hierarchy.

We believe SOCAMM2 is laying the groundwork for a more sustainable, scalable, and serviceable foundation for the AI-driven future. As the AI data center continues its rapid expansion, the fight for memory dominance, now featuring SOCAMM2, will undoubtedly shape the next generation of artificial intelligence.

Comments