As we settle into early 2026, the global hardware and consumer technology industry isn't just evolving; it's undergoing a seismic shift. Artificial Intelligence isn't merely a buzzword this year; it's the foundational force behind a torrent of innovation, investment, and, perhaps most critically, a tangible reshaping of how technology integrates with our physical world. This isn't a gentle progression, but what we're seeing described as a "value shock" – a high-stakes re-architecting of our digital and physical realities that demands specialized engineering and expansive infrastructure to even begin to keep pace.

The Edge of Intelligence: AI's On-Device Revolution Takes Hold

The most compelling narrative unfolding in 2026 is undoubtedly the determined migration of AI from the sprawling, distant confines of cloud servers to the very devices and environments we interact with daily. This isn't just about convenience; it's about necessity. Moving AI to the edge promises ultra-low latency, crucial for real-time applications like augmented reality filters or snappy biometric authentication, where instantaneous responses are non-negotiable. Furthermore, on-device AI offers rock-solid privacy, ensuring sensitive data never leaves the device, a significant win for user trust and regulatory compliance. It also enables crucial offline functionality, meaning AI features work seamlessly even without an internet connection, reducing data transfer costs and enhancing personalization.

NVIDIA's Blackwell platform stands as a prime example of this paradigm shift. Designed explicitly for AI's expansion into the physical world, Blackwell isn't just an incremental update. It's equipped with dedicated hardware for transformer inference, video processing, and sustained power delivery, enabling operations in milliseconds. Its ability to process continuous sensor streams and make real-time decisions in physical spaces, where failure carries real-world consequences, highlights AI's growing demand for intelligence that can "see, manipulate, and operate" in our environment. Compared with its predecessor, Hopper, Blackwell is credited with a 2.5x performance boost and packs 208 billion transistors against Hopper's 80 billion. It features a second-generation Transformer Engine, a dedicated decompression engine, and a 1.8 TB/s NVLink interconnect, double Hopper's 900 GB/s. The platform is explicitly optimized for generative AI and trillion-parameter models, a clear departure from Hopper's broader HPC and AI focus.
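The generational figures quoted above are easier to compare as ratios. The short Python sketch below uses only the numbers from this section; the dictionary and function names are illustrative, and it assumes 1 TB/s equals 1,000 GB/s.

```python
# Figures quoted in this section; names are illustrative, not NVIDIA terminology.
HOPPER = {"transistors_billion": 80, "nvlink_gb_per_s": 900}
# 1.8 TB/s expressed in GB/s, assuming 1 TB = 1,000 GB.
BLACKWELL = {"transistors_billion": 208, "nvlink_gb_per_s": 1800}


def generation_ratios(new, old):
    """Return new/old for every metric present in the older generation."""
    return {metric: new[metric] / old[metric] for metric in old}


ratios = generation_ratios(BLACKWELL, HOPPER)
for metric, ratio in ratios.items():
    print(f"{metric}: {ratio:.1f}x")
```

On these inputs the transistor ratio works out to 2.6x and the NVLink ratio to 2.0x, so the quoted 2.5x performance figure is a separate claim from the transistor count, not a restatement of it.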

This ambitious move to the edge is fueling what some analysts are calling the "most significant hardware upgrade cycle in decades." While that might sound like marketing hyperbole, the underlying demand for specialized AI PCs — devices purpose-built to harness these advanced AI capabilities directly — suggests a genuine inflection point. These machines aren't just faster; they demand neural processing units (NPUs) that are orders of magnitude more powerful, sophisticated multi-sensor arrays, advanced thermal management, and battery technology capable of continuous processing.

AMD is making a strong play in this space with its Ryzen AI 400 Series processors, launching across major OEMs in Q1 2026. These new chips combine "Zen 5" CPU cores, RDNA 3.5 graphics, and XDNA 2 neural processing units, targeting both consumers and businesses. The highest-end Ryzen AI 9 HX 475 chip is slated to deliver up to 60 trillion operations per second (TOPS), with other chips in the series starting at 50 TOPS. That is a notable jump over the 50 TOPS maximum of AMD's previous generation. For context, AMD claims its Ryzen AI 400 Series will offer 1.7 times faster content creation and 1.3 times faster multitasking compared to Intel's Core Ultra 9 288V processors.

Not to be outdone, Intel unveiled its Core Ultra Series 3 processors at CES 2026, built on its cutting-edge 18A process technology. These chips are set to power over 200 PC designs from major global partners, with pre-orders for the first consumer laptops beginning in January 2026. The top SKUs in the Core Ultra Series 3 mobile lineup feature up to 16 CPU cores, 12 Xe-cores, and 50 NPU TOPS. Intel boasts impressive performance figures, claiming up to 1.9 times higher large language model (LLM) performance and up to 4.5 times higher throughput on vision language action (VLA) models. Additionally, they project up to 60% better multithread performance and over 77% faster gaming performance compared to their "Lunar Lake" predecessors.

While AMD's 60 NPU TOPS in its top-tier Ryzen AI 400 series slightly edges out Intel's 50 NPU TOPS in raw AI compute, both companies are trailing Qualcomm's formidable 80 TOPS claim for its upcoming Snapdragon X2 Elite processors. In our view, the raw TOPS numbers are important, but real-world application performance and software ecosystem integration will ultimately dictate which platform truly resonates with users. Intel still tends to hold a slight advantage in single-core performance, which can be noticeable in moment-to-moment interactions, while AMD often delivers better value for multi-threaded workloads and integrated graphics at similar price points.

Gartner forecasts that AI PCs will command a remarkable 55% market share in 2026, a sharp increase from 31% in 2025, signaling a rapid embrace of on-device AI. This surge isn't necessarily driven by a "killer app" yet, but rather by users preparing for the growing integration of AI at the edge, and the simple fact that it will become increasingly difficult to buy a PC without AI silicon. The larger question remains: can vendors effectively monetize these AI PCs, or will the NPU become just another expected, undifferentiated feature? We also note Gartner's observation of a divide in demand: Arm-based laptops are expected to gain a larger share in the consumer market, while businesses continue to prefer x86 on Windows, which is projected to make up 71% of the AI business laptop market in 2025.

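Here's a quick look at the peak NPU compute claims from the major players, using the figures cited above:

- Qualcomm Snapdragon X2 Elite: 80 TOPS (claimed)
- AMD Ryzen AI 400 Series (Ryzen AI 9 HX 475): up to 60 TOPS
- Intel Core Ultra Series 3 (top mobile SKUs): 50 TOPS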

Powering the AI Machine: The Unseen Infrastructure Demands

The relentless proliferation of AI, whether operating at the edge or deeply embedded in the cloud, is creating an insatiable demand for the foundational infrastructure that makes it all possible. This year, our attention is drawn to the bedrock elements: specialized chips, high-bandwidth memory, colossal storage, and the often-overlooked yet utterly crucial power and cooling systems. Without these, the grand visions of AI remain just that – visions.

Hyperscaler capital expenditure (capex) projections for 2026 have been revised upward, now reaching approximately $600 billion, with upside scenarios pushing toward an astonishing $700 billion. This represents a staggering 36% year-over-year increase. While much of the investment in 2024 poured into GPUs and semiconductors, 2026 is expected to see a shift closer to a 50/50 split as passive infrastructure – the pipes, wires, and chillers – finally catches up to the demands of these power-hungry systems.
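To put the capex figures in perspective, the prior-year base that a 36% increase implies can be backed out directly. This is a rough sketch that assumes the 36% year-over-year figure applies to the roughly $600 billion base case; the function name and the exact 50/50 split are illustrative simplifications, not outputs of any forecast model.

```python
def implied_prior_year(current_total, yoy_growth):
    """Back out last year's total from this year's total and the growth rate."""
    return current_total / (1 + yoy_growth)


BASE_CASE_2026 = 600.0  # USD billions, from the text
UPSIDE_2026 = 700.0     # USD billions, upside scenario from the text
YOY_GROWTH = 0.36       # assumption: the 36% applies to the base case

implied_2025 = implied_prior_year(BASE_CASE_2026, YOY_GROWTH)
upside_growth = UPSIDE_2026 / implied_2025 - 1
passive_infra_2026 = BASE_CASE_2026 * 0.5  # assumption: an exact 50/50 split

print(f"Implied 2025 capex: ${implied_2025:.0f}B")
print(f"Growth in the upside scenario: {upside_growth:.0%}")
print(f"Passive infrastructure at a 50/50 split: ${passive_infra_2026:.0f}B")
```

Under those assumptions, the implied 2025 total is about $441 billion, the upside scenario would represent growth of roughly 59%, and an even split would direct about $300 billion toward passive infrastructure.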

- Memory and Processors: Micron Technology has already secured agreements for its entire calendar 2026 supply of HBM (High-Bandwidth Memory), a component absolutely critical for AI accelerators. This highlights the intense, almost frantic, demand for this specialized memory. AMD is further strengthening its AI data center presence with the deployment of MI450 GPUs beginning in H2 2026, a move bolstered by a key partnership with OpenAI.

- Storage Solutions: Seagate Technology is directly addressing the surging AI-driven data storage demand with its 32TB hard drives (Exos, SkyHawk AI, IronWolf Pro portfolios). Production for these high-capacity drives is fully committed through calendar 2026. Its innovative Mozaic HAMR technology is already qualified with five major cloud providers and is targeting three more by mid-2026, particularly for the compute-intensive demands of video analytics.

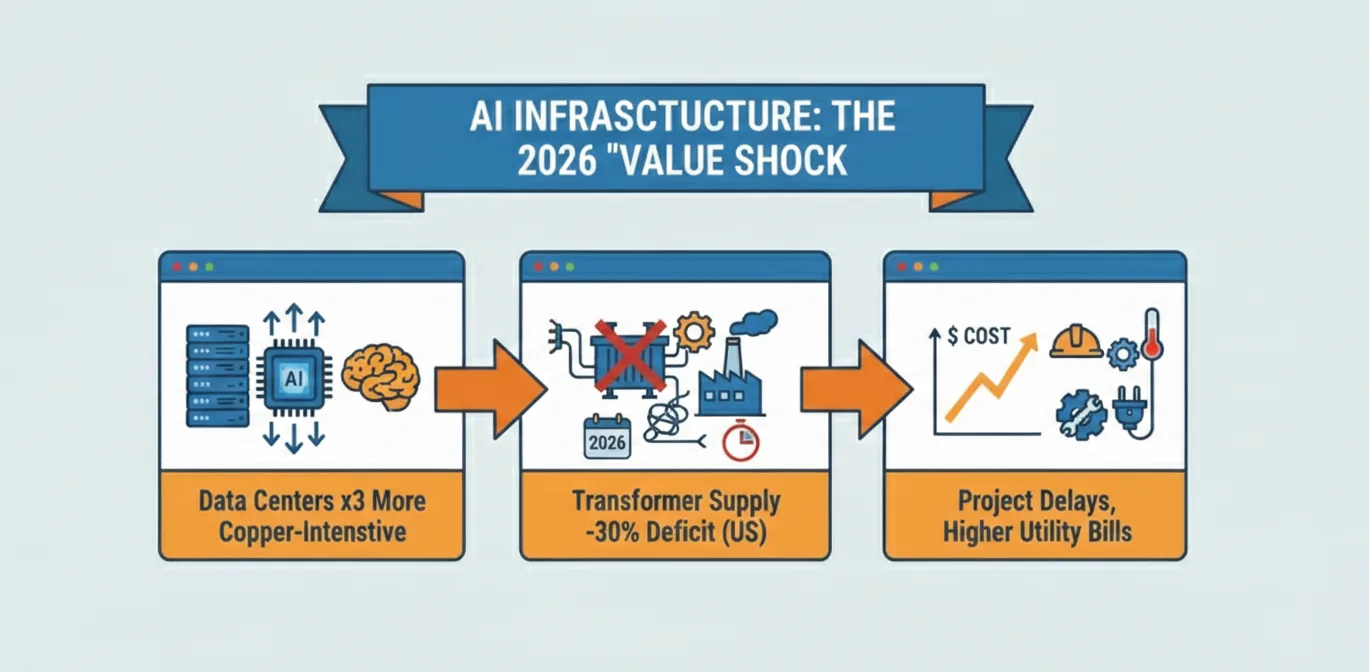

- The "Value Shock" of Infrastructure: The 2026 AI cycle is aptly characterized as a "value shock": a high-intensity, precision industrial boom focused heavily on electrical engineering, advanced thermal management, and sophisticated semiconductor packaging. AI data centers are proving to be two to three times more copper-intensive than traditional ones due to higher power density and the widespread shift to liquid cooling. This is creating entirely new manufacturing verticals and elevating companies like Vertiv and nVent into key players as rack density climbs from 10 kW to 100 kW. TSMC, the linchpin for advanced chip fabrication, is rapidly expanding its CoWoS advanced packaging capacity, which is already fully booked through 2026. This interplay of hardware innovation and infrastructure buildout shows just how deeply AI has reshaped the hardware industry, demanding a complete rethinking of how we power and cool our digital future.

Economic Currents: Growth Amidst Global Shifts

Against a backdrop of projected global GDP growth of 2.7% and consumer spending growth of 2.4% in 2026, the computer hardware market is demonstrating strong expansion. The global computer hardware market, valued at USD 130.86 billion in 2022, is projected to reach USD 164.0 billion in 2026, with the underlying forecast citing a compound annual growth rate (CAGR) of approximately 7.86% over its 2020-2034 window. The U.S. consumer technology industry alone is projected to reach $565 billion in revenue in 2026, with hardware revenues expected to grow 3.4%.
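The market figures above can be sanity-checked with the standard CAGR formula. The sketch below is a minimal illustration with hypothetical function names: it computes the growth rate implied by the 2022 and 2026 values and compares it with a projection at the quoted 7.86% rate. It assumes the 7.86% rate can be applied from the 2022 value, which the source forecast may not intend.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value, rate, years):
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


MARKET_2022 = 130.86  # USD billions, from the text
MARKET_2026 = 164.0   # USD billions, from the text
QUOTED_CAGR = 0.0786  # quoted for the 2020-2034 window

implied_rate = cagr(MARKET_2022, MARKET_2026, 2026 - 2022)
projected_2026 = project(MARKET_2022, QUOTED_CAGR, 2026 - 2022)

print(f"Implied 2022-2026 CAGR: {implied_rate:.2%}")
print(f"2026 value at the quoted rate: USD {projected_2026:.1f}B")
```

On these inputs the implied 2022-2026 rate is roughly 5.8% a year, while compounding at 7.86% from 2022 would give about USD 177 billion in 2026. The quoted CAGR therefore most likely describes the longer 2020-2034 window, not this four-year span.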

This growth, we believe, is fundamentally driven by the increasing adoption of cloud computing, the relentless expansion of big data analytics, widespread digital transformation initiatives, the proliferation of smart devices and IoT, and the enduring prevalence of remote and hybrid work models. We anticipate the server market and storage devices will experience the highest growth rates, particularly in North America and Asia-Pacific, fueled by rising data volumes and expanding cloud-based services. Business investment, especially in the massive AI infrastructure buildout, is accelerating, effectively offsetting any potential slowdown in consumption. Intriguingly, small businesses are adopting generative AI faster than consumers, with AI-integrating firms showing significantly higher transaction growth. This suggests that practical, business-oriented AI applications are finding immediate traction, moving beyond the consumer novelty phase.

Key Players and Strategic Investments

The computer hardware industry remains moderately concentrated, with major multinational corporations vying for market share. Leading players such as NVIDIA, AMD, Seagate, Micron, IBM, Acer, Samsung Electronics, HP, Cisco Systems, Dell Technologies, Lenovo Group, Apple, Panasonic, Intel, and Sony are all navigating this dynamic market.

Strategic investments across the industry clearly reflect its intense focus on AI. Micron Technology, for instance, has planned a substantial $20 billion capital investment for fiscal 2026, which includes groundbreakings on a New York megafab and a second Idaho facility. Its strategic exit from consumer products allows a sharper focus on high-growth data center segments like HBM, a decision we view as shrewd given the current demand. IBM stands to benefit from strategic initiatives, including the pending $11 billion Confluent acquisition, expected to boost adjusted EBITDA within the first year. This is coupled with their January announcement of IBM Sovereign Core, aimed at addressing surging digital sovereignty demands for AI workloads — a timely move in an increasingly regulated global landscape. AMD is expanding its software reach, with its ROCm software platform having doubled compatibility in 2025, broadening developer accessibility. The widespread adoption of the multi-cloud model is also expanding opportunities for industry participants, as businesses seek greater scalability and optimized resource utilization.

Navigating the Bottlenecks: A Critical Look

Despite the immense opportunities, the industry faces significant hurdles that we cannot overlook. Persistent supply chain constraints and fluctuating component prices remain critical challenges. The "value shock" of AI infrastructure itself reveals acute bottlenecks: U.S. transformer supply deficits are projected to hit 30% in 2026, with lead times extending to an alarming three to six years. This situation threatens to create a "capex air pocket" if permitting delays and grid connection queues prevent crucial capital deployment, which could significantly slow the AI buildout.

Geopolitical instability casts a long shadow over the entire enterprise. The concentration of advanced semiconductor manufacturing in Taiwan represents the single greatest supply chain vulnerability for AI chips; a Taiwan Strait conflict could, in our view, halt the entire AI infrastructure cycle overnight. Moreover, the physical AI buildout creates acute dependencies on commodities like rare earth elements, lithium, and cobalt, where China dominates global supply chains, posing significant strategic vulnerabilities. The U.S. is racing to secure domestic chip production as AI demand explodes, creating a scenario where maximum dependency on Taiwan coincides with maximum geopolitical tension – a truly precarious position.

Other challenges include intense competition, evolving consumer preferences, stringent environmental regulations, and rising costs, which weigh disproportionately on smaller companies. Macroeconomic factors such as elevated inflation (projected to ease from 3.4% in 2025 to 3.1% in 2026, but still a factor) and higher interest rates also temper the outlook, adding layers of financial complexity to an already demanding environment.

The Deeper Game: Implications for a Transformed World

The 2026 outlook points to far more than just technological advancements; it signals a deeper economic and industrial transformation. The shift to AI in the physical world and at the edge is fundamentally altering how industries operate, from manufacturing and logistics to healthcare and the very fabric of our smart cities. The "Intelligent Transformation," identified by the Consumer Technology Association (CTA) as a major force for 2026, signifies that AI is becoming foundational, woven into the core processes of nearly every sector.

The urgent need for specialized hardware, from NVIDIA's Blackwell platform to AMD's MI450 GPUs and the new wave of AI PCs, clearly highlights a new era of computing. The colossal investments in data center infrastructure, advanced packaging, and novel cooling solutions signal a fundamental reorientation of capital toward these critical, often hidden, components. The geopolitical risks make it undeniably clear that this technological race carries profound strategic implications, forcing nations to consider domestic production and supply chain resilience as matters of national security.

This is a period where innovation in processing power, memory capacity, and miniaturization remains critical, but it's increasingly complemented by an urgent focus on energy efficiency, advanced thermal management, and robust physical integration. The drive for digital sovereignty, as addressed by initiatives like IBM Sovereign Core, further adds layers of complexity to this global buildout, shaping not just what technology is developed, but where and by whom.

Conclusion: Beyond the Hype, Into Reality

As February 2026 progresses, the global hardware and consumer tech industry is clearly riding an AI-driven wave that promises to reshape economies and daily lives. The projections for growth are strong, fueled by accelerating business investment and a clear, undeniable demand for more intelligent, responsive, and physically capable technologies. However, this sweeping period of change is not without its risks. The intricate web of global supply chains, the ever-present geopolitical tensions, and the sheer scale of the required infrastructure buildout present serious challenges that industry leaders and policymakers must navigate with foresight and agility.

The coming year will be less about the abstract potential of AI and more about its concrete manifestation – not just in your next PC, but in the data centers powering autonomous systems, and ultimately, in the very fabric of our increasingly intelligent world. The hardware revolution isn't just coming; it's already here, building the very foundations for a future where intelligence is truly ubiquitous.
