Just four months ago, the tech world was practically giddy. Nvidia CEO Jensen Huang, joined by OpenAI's Sam Altman and Greg Brockman, unveiled what was touted as a massive $100 billion deal in September 2025. The memorandum of understanding (MoU) painted a picture of Nvidia pouring this colossal sum into helping OpenAI build an unprecedented 10 gigawatts of computing power—a scale roughly equivalent to the output of ten nuclear power plants. Market analysts celebrated, Nvidia's stock soared, and the AI 'gold rush' seemed to be reaching its speculative peak.

But today, that audacious vision looks largely frozen. Jensen Huang has now confirmed that Nvidia will invest in OpenAI, yet he has significantly walked back the initial promise, saying the amount would be less than $100 billion but "probably the largest investment we've ever made." This public concession confirms what industry insiders have been whispering for months: the original megadeal for 10 gigawatts of infrastructure never moved past its preliminary stages. Both companies have clearly been forced to rethink the deal's scope and terms, and frankly, we're not surprised.

So, what triggered this dramatic recalibration, and what does it truly mean for the future of AI development and infrastructure, beyond the hype?

The Grand Ambition Meets Harsh Reality (and Lingering Questions)

The September 2025 announcement came hot on the heels of OpenAI's tentative $100 billion equity agreement with Microsoft. This painted a picture of an AI titan rapidly consolidating immense resources on its path to superintelligence. Sam Altman even suggested the new Nvidia-built data centers would be in addition to previously announced projects, underscoring what seemed to be OpenAI's insatiable hunger for compute.

However, from the outset, the deal was only a memorandum of understanding, not a definitive agreement, a crucial detail Jensen Huang privately emphasized at the time. Both Nvidia's own November 2025 filing and CFO Colette Kress, speaking in December 2025, explicitly stated that there was no assurance of definitive agreements. This cautionary language stood in sharp contrast to the initial fanfare, hinting at underlying complexities that were perhaps overlooked in the rush of the announcement.

Nvidia's Strategic Reassessment: More Than Just Cold Feet

Nvidia's hesitation appears to stem from a confluence of strategic and financial concerns, suggesting a calculated pullback rather than outright abandonment.

- "Lack of Discipline": Jensen Huang reportedly criticized OpenAI's business approach as a "lack of discipline." This likely refers to OpenAI's astronomical spending on computing, putting it on the hook for an estimated $1.4 trillion in commitments—more than 100 times its 2025 revenue. Microsoft's reported $350 billion loss on AI spending the same week the deal stalled certainly adds weight to such concerns, making OpenAI's burn rate a genuine point of contention.

- Diversifying Bets: While OpenAI remains one of Nvidia's largest customers, the chipmaker is keenly aware of the growing competitive field. Huang is concerned about the growth of OpenAI's rivals, including Google's Gemini (which has slowed ChatGPT's growth, triggering a reported "code red" at OpenAI) and Anthropic, whose Claude Code is also putting pressure on OpenAI. Nvidia has been actively diversifying its investments, committing up to $10 billion into Anthropic in November 2025 and an additional $2 billion into CoreWeave Inc. This strategy mitigates risk and fosters competition among AI developers, which ultimately drives demand for Nvidia's GPUs across a broader customer base, rather than solely relying on one potentially overextended player.

- "Circular Deals" Concern: Insiders at Nvidia reportedly worried about the original deal's size, terms, and the optics of "circular deals," where Nvidia invests heavily in a customer who then uses that capital to buy Nvidia chips. We believe this concern is valid, especially as prominent investors like Peter Thiel, SoftBank, and Michael Burry have already begun pulling back from Nvidia amid warnings of an "AI bubble." Thiel, for instance, fully exited his Nvidia stake in Q3 2025, Burry has placed bearish put options against Nvidia, and SoftBank sold its entire $5.8 billion Nvidia stake, in part to finance other AI investments including OpenAI. Such high-profile exits and bearish positions underscore a market wary of artificial demand.

- Financial Prudence: Nvidia's $500 billion revenue forecast notably excluded any potential revenue from the OpenAI deal, indicating that the company was not banking on its completion in its original form. While OpenAI is attempting to raise up to $100 billion in its current funding round, Nvidia's current discussions are focused on a smaller equity investment as part of that round, reflecting a more cautious and financially sound approach.

OpenAI's Quest for Compute and Independence: A Multi-Front Battle

OpenAI spent 2025 in a relentless sprint to secure vast computing capacity. Beyond the Nvidia deal, it has inked gargantuan cloud infrastructure commitments: a $38 billion, seven-year partnership with Amazon AWS, a renegotiated $250 billion commitment to Microsoft Azure that leaves it free to use multiple clouds, and roughly $300 billion with Oracle over five years, alongside a partnership with Google Cloud. Together with its other infrastructure deals, OpenAI's commitments total around $1.4 trillion, which has understandably given investors jitters about its ability to pay, despite Sam Altman asserting that Microsoft and Nvidia are "passive investors" and that OpenAI's nonprofit board controls the company.

Adding another layer to OpenAI's strategy is its ongoing effort to develop its own AI chips. This move signals a clear intent to reduce its dependence on Nvidia, a crucial step for long-term cost control and supply chain resilience. This desire for self-sufficiency, coupled with massive existing cloud commitments, likely made the sheer scale of the original Nvidia infrastructure deal less appealing, or even redundant, in its initial form.

The competitive picture is also shaping OpenAI's needs. While GPT-5.x is its consolidated flagship, the rise of Google's Gemini, Anthropic's Claude Code, and breakthroughs like DeepSeek-V3—which demonstrates frontier-level performance at a fraction of compute cost, potentially reducing infrastructure spending by 30-50%—mean OpenAI must be efficient and adaptable, not just expansive. The era of simply throwing more hardware at the problem might be drawing to a close.

Broader Implications for the AI Industry: The Reality Check

The scaling back of the OpenAI-Nvidia megadeal, even if a significant but smaller investment proceeds, sends clear signals across the AI industry. We believe this represents a critical inflection point, moving from unchecked ambition to a more sober assessment of economic realities.

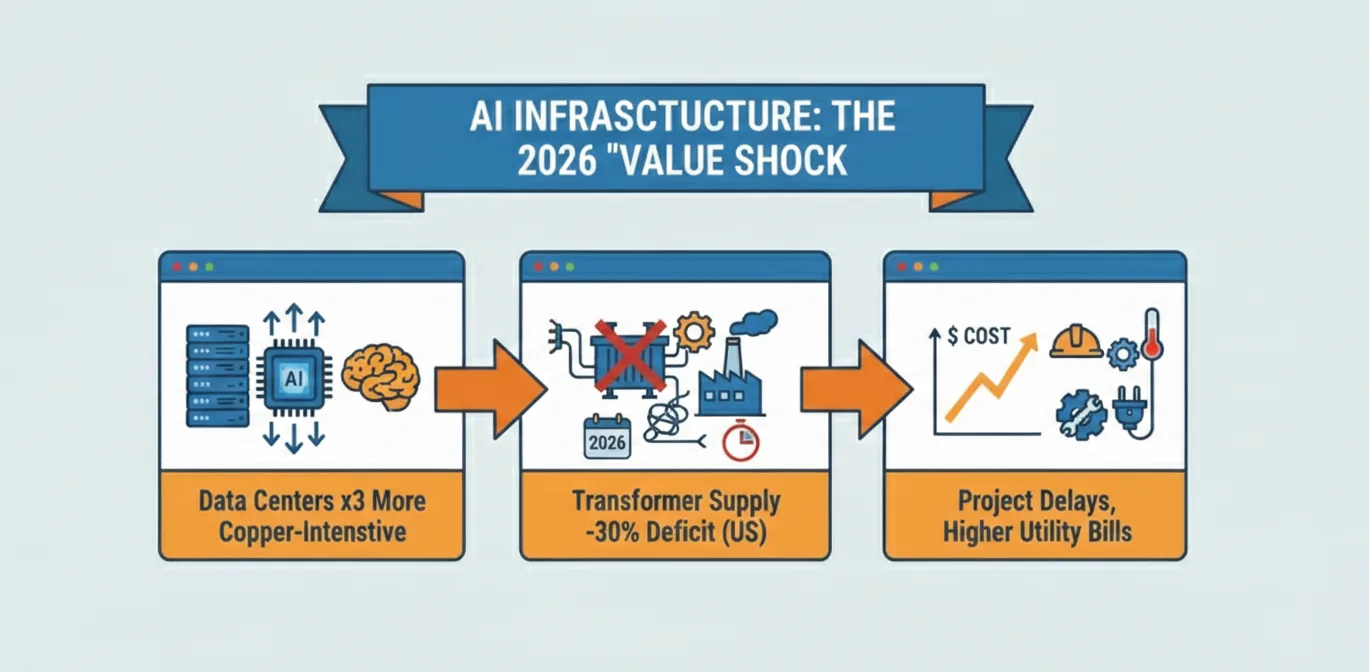

- Reckoning on AI Infrastructure Costs: The sheer scale of AI training and deployment has led to record-breaking capital expenditure. IBM CEO Arvind Krishna's warning at Davos in January 2026 of an industry "depreciation trap" resonates deeply with the current situation. Krishna estimates that outfitting a single 1-gigawatt AI data center costs roughly $80 billion, and with the industry aiming for 100 gigawatts, the total bill could reach $8 trillion, requiring $800 billion in profits just to cover interest. He points out that unlike traditional infrastructure, AI accelerators have a functional competitive lifecycle of only five years, meaning the $8 trillion investment needs to be "refilled" constantly. The industry is being forced to reassess whether endless scaling with ever-more expensive GPUs is the only viable path.

- Diversification of Compute: OpenAI's multi-cloud strategy and its internal chip development reflect a broader industry trend towards diversifying compute resources. Companies are no longer solely beholden to one chip manufacturer or cloud provider, exploring alternatives like AWS's Trainium chips and Google's in-house TPU processors (heavily relied upon by Anthropic for training, and Google for Gemini). This fosters a more competitive and resilient infrastructure market, which is a welcome development in our view.

- Nvidia's Enduring Dominance, but with Nuance: While the original $100 billion infrastructure deal is off, Nvidia remains central to OpenAI's operations. OpenAI explicitly stated that Nvidia technology "powers their systems today, and will remain central for future scaling." Nvidia's commitment to invest, albeit a smaller sum, confirms its vested interest in OpenAI's success. This highlights Nvidia's strategy of being an investor and a supplier, backing multiple horses in the AI race while still maintaining its core business strength, reflected in its comparatively healthy forward P/E multiple of 27x.

- A Maturing AI Investment Market: The initial "move fast and break things" mentality, particularly regarding infrastructure spending, is giving way to more cautious, strategically diversified investments. The shift from a speculative, all-encompassing infrastructure deal to a more focused equity investment reflects a maturing market where profitability, sustainable growth, and risk management are increasingly critical, especially as OpenAI prepares to go public by the end of 2026.
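The infrastructure-cost figures attributed to Arvind Krishna in the list above can be checked with a little arithmetic. The Python sketch below is only an illustration: the function names are hypothetical, the implied interest rate is our inference from the quoted numbers rather than something Krishna is reported to have stated, and the yearly replacement figure assumes the entire build-out is written off evenly over the five-year lifecycle, which is a simplification.

```python
# Figures as quoted in the article (attributed to Arvind Krishna).
COST_PER_GW_USD = 80_000_000_000        # ~$80 billion per 1-gigawatt data center
TARGET_GW = 100                         # industry-wide target of 100 gigawatts
ANNUAL_INTEREST_USD = 800_000_000_000   # ~$800 billion in profits to cover interest
LIFECYCLE_YEARS = 5                     # competitive lifecycle of AI accelerators


def total_buildout_cost(cost_per_gw: int, gigawatts: int) -> int:
    """Total capital cost of the build-out, in dollars."""
    return cost_per_gw * gigawatts


def implied_interest_rate(annual_interest: int, principal: int) -> float:
    """Interest rate implied by the quoted annual interest bill (our inference)."""
    return annual_interest / principal


def annual_replacement_cost(principal: int, lifecycle_years: int) -> float:
    """Yearly spend needed to 'refill' the hardware.

    Assumption: the whole investment is replaced evenly over its lifecycle.
    """
    return principal / lifecycle_years


if __name__ == "__main__":
    total = total_buildout_cost(COST_PER_GW_USD, TARGET_GW)
    rate = implied_interest_rate(ANNUAL_INTEREST_USD, total)
    refill = annual_replacement_cost(total, LIFECYCLE_YEARS)
    print(f"Total build-out: ${total / 1e12:.1f} trillion")
    print(f"Implied interest rate: {rate:.0%}")
    print(f"Replacement spend per year: ${refill / 1e12:.1f} trillion")
```

Under these assumptions, the quoted $8 trillion total follows directly from the per-gigawatt cost, and the $800 billion interest figure corresponds to a rate of roughly 10 percent. The replacement figure is the roughest of the three, since in practice only part of a data center's cost is short-lived accelerator hardware.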

The OpenAI-Nvidia megadeal's transformation from a colossal infrastructure project to a more measured equity investment signifies a critical inflection point for the AI industry. It highlights a growing awareness of cost, competition, and strategic independence, suggesting that the future of AI infrastructure may be more diversified, efficient, and ultimately, more sustainable. The initial euphoria may have cooled, but the strategic chess game for AI dominance is just getting started.
