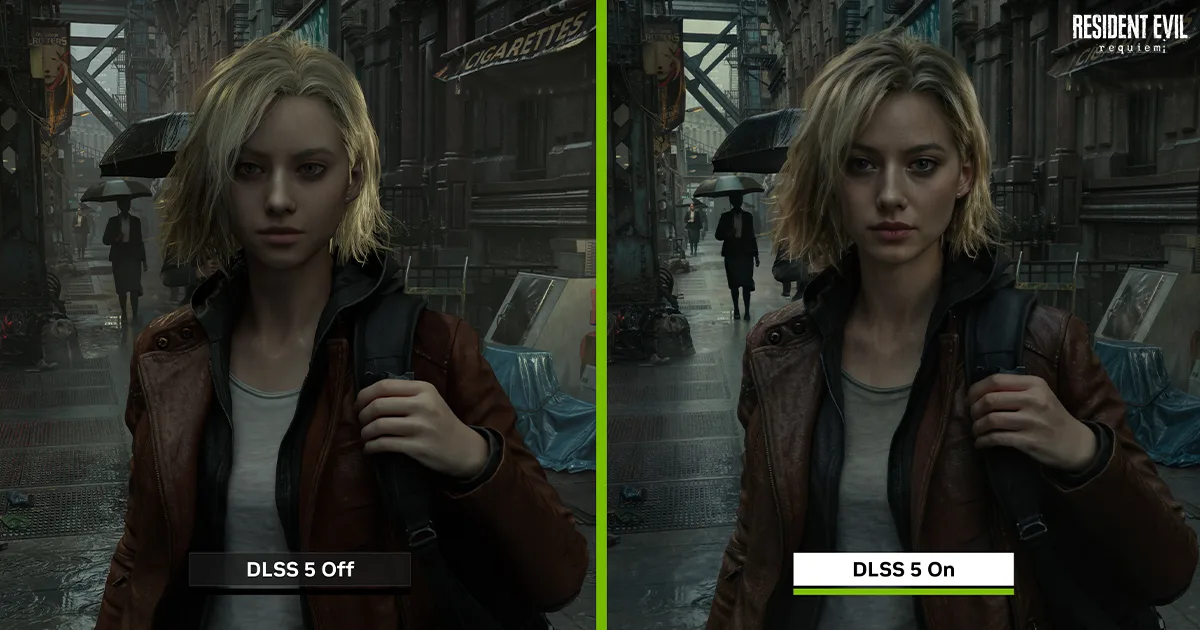

On March 16, 2026, NVIDIA debuted DLSS 5, triggering a reaction split between awe and immediate skepticism. This is no longer a matter of simple upscaling or interpolating frames to smooth out performance. NVIDIA is positioning DLSS 5 as "content-control generative AI," a shift that fundamentally alters the relationship between a game engine and the final image on a monitor.

This is the most aggressive move NVIDIA has ever made to decouple visual quality from local hardware rendering. By moving away from 3D data and relying on 2D inference, NVIDIA is effectively replacing the traditional rendering pipeline with a generative model that "guesses" what a photorealistic world should look like.

A Massive Disconnect in Technical Strategy

The most striking aspect of the DLSS 5 announcement is the disconnect between NVIDIA’s leadership and its technical staff. During the unveiling, CEO Jensen Huang claimed that DLSS 5 functions at the "geometry level." However, NVIDIA spokesperson Jacob Freeman later clarified that the system is actually blind to 3D geometry, depth buffers, and material maps. Instead, it takes a single 2D rendered frame and motion vectors as its only inputs.

This distinction matters. If the AI cannot see the geometry, it isn't "enhancing" the game in the traditional sense; it is interpreting a flat image and redrawing it. That is troubling because the model never sees the developer's raw scene data. It looks at a picture, recognizes a character's face or the texture of a jacket, and uses its training data to "infuse" that image with what it thinks is better lighting and material detail.

DLSS 5 vs. Previous Generations

To understand this departure from the norm, compare what the AI is actually doing with the DLSS versions currently running on RTX 40- and 50-series cards.

By operating entirely in screen space, DLSS 5 has no awareness of anything outside the visible frame. This creates a disconnect: the game engine knows there is a light source behind a door, but DLSS 5 only knows what it can see. This explains the technical artifacts visible in early preview footage, such as ghosting and flickering shadows that struggle with consistency.
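To make the screen-space limitation concrete, here is a minimal sketch of generic motion-vector reprojection, the kind of lookup any technique limited to a 2D frame plus motion vectors has to rely on. It is not NVIDIA's implementation; the `reproject` function, the whole-pixel motion vectors, and the tiny grayscale frame are all assumptions made for illustration.

```python
from typing import List, Optional, Tuple

Frame = List[List[float]]                  # grayscale image, frame[y][x]
MotionField = List[List[Tuple[int, int]]]  # per-pixel (dx, dy), whole pixels


def reproject(prev: Frame, motion: MotionField) -> List[List[Optional[float]]]:
    """Fetch each pixel's history from the previous frame via its motion vector.

    motion[y][x] == (dx, dy) means the content now at (x, y) sat at
    (x - dx, y - dy) in the previous frame. If that source position is
    outside the frame, there is no history to fetch and the result is None.
    """
    height, width = len(prev), len(prev[0])
    out: List[List[Optional[float]]] = [[None] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = motion[y][x]
            sx, sy = x - dx, y - dy
            if 0 <= sx < width and 0 <= sy < height:
                out[y][x] = prev[sy][sx]
    return out


# Hypothetical 1x4 frame whose content scrolls right by one pixel.
prev_frame = [[0.1, 0.2, 0.3, 0.4]]
motion = [[(1, 0)] * 4]
history = reproject(prev_frame, motion)
print(history)  # [[None, 0.1, 0.2, 0.3]]
```

The leftmost pixel comes back as `None`: whatever just scrolled into view was never on screen, so a screen-space method has nothing to look up and must invent a value. An engine with access to the full 3D scene would not face that gap, which is consistent with the ghosting and unstable shadows described above.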

The Problem of AI Hallucinations

AI "hallucinations" have plagued LLMs and image generators for years, but seeing them in real-time gaming is a new frontier. Because DLSS 5 is trained to recognize "semantics" like hair, fabric, and translucent skin, it often takes liberties with the source material.

In early previews of Starfield, critics noted that characters appeared with extra hair detail or altered facial features that were absent from the original character model. More alarming are reports of the AI adding makeup to characters in grim, post-apocalyptic settings, effectively overwriting the artistic intent of the developers.

For developers, this presents a nightmare for quality control. There is currently no direct method to correct specific AI-generated artifacts. If the AI decides a character should have a different nose shape or a shinier coat, the developer is limited to tweaking global sliders like intensity, contrast, or gamma. This lack of granular control will be a major sticking point for studios that pride themselves on specific aesthetic identities.
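A short sketch shows why global sliders are such a blunt instrument. Nothing here reflects NVIDIA's actual controls or API: `apply_global_controls`, its parameters, and the two-pixel frames are hypothetical stand-ins for "intensity, contrast, gamma" applied uniformly to every pixel.

```python
from typing import List

Frame = List[List[float]]  # grayscale values in [0.0, 1.0], frame[y][x]


def apply_global_controls(original: Frame, generated: Frame,
                          intensity: float, contrast: float,
                          gamma: float) -> Frame:
    """Blend the generated frame over the original, then apply contrast and gamma.

    Every pixel gets the same treatment; there is no per-region or
    per-object parameter (an assumption mirroring the global-only
    controls described in the article). gamma must be greater than zero.
    """
    out: Frame = []
    for row_o, row_g in zip(original, generated):
        row = []
        for o, g in zip(row_o, row_g):
            v = o + intensity * (g - o)     # intensity: blend toward AI output
            v = (v - 0.5) * contrast + 0.5  # contrast: scale around mid-grey
            v = min(max(v, 0.0), 1.0)       # clamp to the valid range
            v = v ** (1.0 / gamma)          # gamma: brighten or darken
            row.append(v)
        out.append(row)
    return out


# Pixel 0: an enhancement the artist likes. Pixel 1: an unwanted change.
original = [[0.5, 0.5]]
generated = [[0.7, 0.9]]

half = apply_global_controls(original, generated, 0.5, 1.0, 1.0)
off = apply_global_controls(original, generated, 0.0, 1.0, 1.0)
```

Halving `intensity` pulls the unwanted pixel back toward the original, but it pulls the wanted one back by the same proportion. Setting it to zero removes the artifact only by removing everything else with it. Fixing one nose or one jacket would require some form of masked or per-object control, which, per the reporting above, developers do not currently have.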

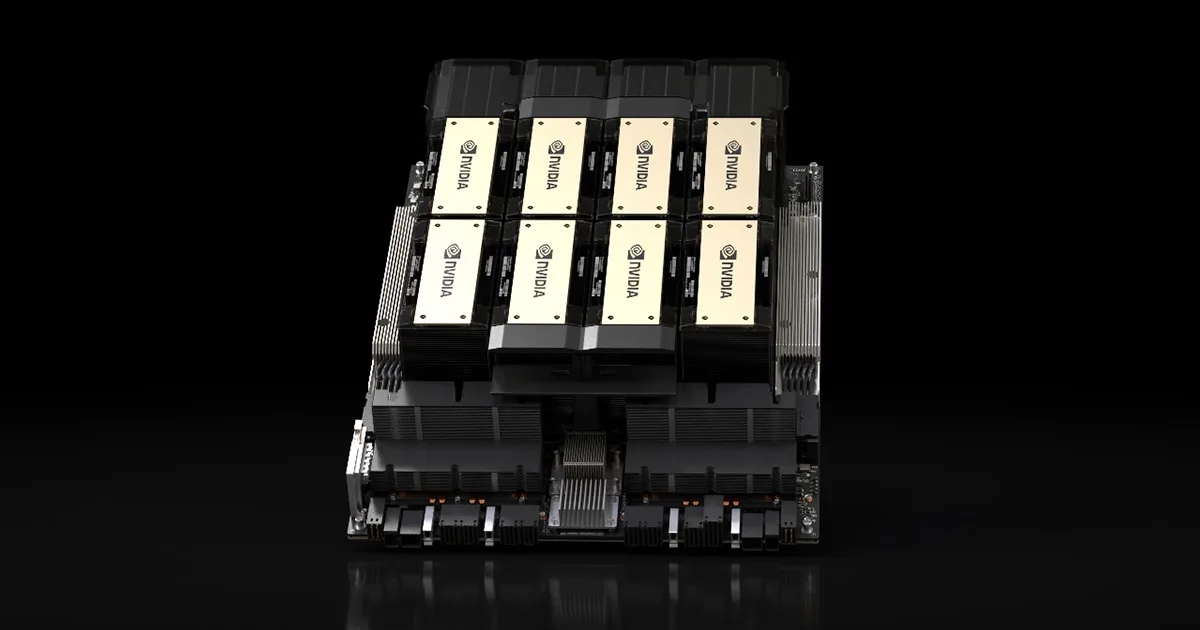

Real-Time Neural Rendering Requirements

While NVIDIA is targeting a fall 2026 consumer release, the hardware requirements are currently astronomical. Early previews reportedly required two RTX 5090 cards running in tandem to maintain smooth operation. This suggests that "real-time neural rendering" is still far from becoming a mainstream feature.

Despite the heavy hardware tax, the industry is already lining up. Major publishers like Capcom, Ubisoft, and Warner Bros. Games are integrating the technology into upcoming titles like Resident Evil Requiem and Assassin’s Creed Shadows. The integration uses the NVIDIA Streamline framework, which should theoretically make it easier to add to existing titles, but we question whether these visual alterations are worth the loss of artistic accuracy.

TTEK2 Verdict

DLSS 5 is a bold, perhaps reckless, attempt to turn your GPU into a real-time deepfake machine. By ignoring 3D geometry and "interpreting" 2D frames, NVIDIA is prioritizing perceived photorealism over technical accuracy and artistic intent.

Practical Takeaways:

- For Enthusiasts: Don't expect to run this comfortably on mid-range hardware. Early previews reportedly needed two RTX 5090 cards in tandem to keep DLSS 5's neural rendering smooth.

- For Purists: This technology is a red flag. If you care about seeing exactly what the developers built, the "hallucinations" and inferred materials of DLSS 5 will likely frustrate you.

- For the Industry: This marks a shift where NVIDIA is no longer just helping games run faster; it is taking a seat in the director's chair. Developers must now decide whether they will tolerate surrendering control over their character designs to an algorithm.

DLSS 5 feels like a solution in search of a problem. We want our GPUs to render games, not "re-imagine" them. Until NVIDIA provides developers with a way to tether the AI to the actual 3D assets, this technology risks turning every game into a generic, AI-filtered version of itself.
