The AI Gold Rush: Why Your Next PC Upgrade Will Cost a Fortune

The AI revolution is here, and while we're often told it will enhance our lives, there's a silent crisis unfolding in the background that’s already hitting our wallets. Ordinary DRAM, the memory that powers every PC, server, and consumer device, is facing severe shortages. Why? Because manufacturers are shifting capacity away from it en masse, pivoting their focus toward the insatiable, high-margin demands of artificial intelligence. This isn't just a kink in the supply chain; it's a structural realignment, and we, the everyday consumers and businesses, are paying the price.

The Memory Market's Seismic Shift

For decades, the DRAM market operated on a predictable boom-and-bust cycle. Manufacturers would adjust production based on steady, broad-based demand from PCs, servers, and gadgets. That stability has been utterly shattered. Today, a mere three companies—Samsung, SK Hynix, and Micron—control a staggering 90–95% of the global DRAM supply, a concentration that creates a systemic vulnerability, ripe for exploitation. And exploit it they have.

The shift isn't just about increased demand; it's about the kind of demand. AI accelerators like NVIDIA's H100 and AMD's MI300X are hungry for High-Bandwidth Memory (HBM), a specialized DRAM variant that carries 3–5x the margins of commodity DDR5/DDR4. Faced with such a lucrative incentive, these dominant manufacturers have deliberately reallocated wafer capacity away from commodity DRAM to prioritize HBM production. As one industry insider succinctly put it, "The market isn't broken—it's following the money. And the money is in AI." The result is that a market built for broad-based demand is now singularly focused on the AI gold rush, leaving everything else struggling in its shadow.
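To see why capacity flows this way, here is a deliberately simplified toy model, not a description of how any manufacturer actually plans production. It assumes a fixed number of wafers, just two products, and hypothetical margin and demand figures. Only the 3–5x margin ratio comes from the text above; every number and name in the code is an illustrative assumption.

```python
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    margin_per_wafer: float  # hypothetical profit per wafer, arbitrary units
    demand_wafers: int       # hypothetical wafers needed to fill all orders


def allocate_wafers(capacity: int, products: list[Product]) -> dict[str, int]:
    """Greedy allocation: serve the highest-margin product first.

    With one shared capacity limit and a fixed margin per wafer, filling
    products in descending margin order maximizes total margin (the same
    argument as the fractional knapsack problem).
    """
    allocation: dict[str, int] = {}
    remaining = capacity
    for p in sorted(products, key=lambda p: p.margin_per_wafer, reverse=True):
        granted = min(p.demand_wafers, remaining)
        allocation[p.name] = granted
        remaining -= granted
    return allocation


# Hypothetical inputs: HBM margin set to 4x DDR5, inside the 3-5x range cited.
products = [
    Product("DDR5", margin_per_wafer=1.0, demand_wafers=600),
    Product("HBM", margin_per_wafer=4.0, demand_wafers=700),
]
print(allocate_wafers(1000, products))  # {'HBM': 700, 'DDR5': 300}
```

In this sketch DDR5 receives only half the wafers it needs even though its demand hasn't fallen, which is the kind of shortfall commodity buyers are now facing. Real allocation also involves long-term contracts, yields, and process constraints that the model ignores.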

We view this not as a temporary blip but as a fundamental, qualitative shift in the memory industry. The same factories that once produced memory for our laptops are now dedicated to AI supercomputers, leading to collateral damage that ripples through every layer of the digital economy.

Commodity DRAM: Starved, Pricey, and Scarce

The consequences are stark and undeniable, reflected in collapsing inventory and skyrocketing prices. Global DRAM inventory has plummeted to just 8 weeks of supply, a critical low compared to the historical average of 12+ weeks. Some reports indicate inventory levels for SK Hynix and Micron were as low as 2 weeks in late 2025. Cloud providers, the backbone of modern digital services, are reportedly receiving only 70% of their ordered DRAM volumes, forcing them to scramble for alternatives.

Meanwhile, the cost of standard memory has exploded. A 32GB DDR5 kit that cost $110 in early 2025 surged to $442 by late 2025, roughly quadrupling in price (an increase of about 300%). DDR4 prices jumped 158% and DDR5 prices surged 307% within three months of the late-2025 demand shocks. Even 64GB DDR5 modules, priced at $205 in October 2025, now command $880. This isn't a temporary spike: price rises are expected to continue well into 2026, with some analysts forecasting a further 70% increase in DDR5 prices. DDR5 prices in Germany did briefly stabilize in early February 2026, but some experts suggest this may be only a temporary reprieve before further increases.
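Percentages like these are easy to misread: quadrupling a price is a 300% increase, not 400%. A quick way to check the figures, using only the prices quoted above. The last line simply applies the forecast 70% rise to the late-2025 kit price as an illustration, not a prediction.

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new: (new - old) / old * 100."""
    return (new - old) / old * 100


def apply_increase(price: float, pct: float) -> float:
    """Price after an increase of pct percent."""
    return price * (1 + pct / 100)


# Prices quoted in the article.
print(f"32GB DDR5 kit: {pct_change(110, 442):.0f}% increase")      # 302% increase
print(f"64GB DDR5 module: {pct_change(205, 880):.0f}% increase")   # 329% increase
# Illustration only: the $442 kit if a further 70% rise applied to it.
print(f"$442 after +70%: ${apply_increase(442, 70):.2f}")          # $751.40
```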

The underlying issue is simple: demand for commodity DRAM continues to grow from PCs, servers, and embedded systems, but the supply has been deliberately siphoned off for AI. This creates a market where basic memory components are scarce despite ongoing, widespread need.

DRAM Price Hikes: A Snapshot

Prices reflect reported market conditions and may vary.

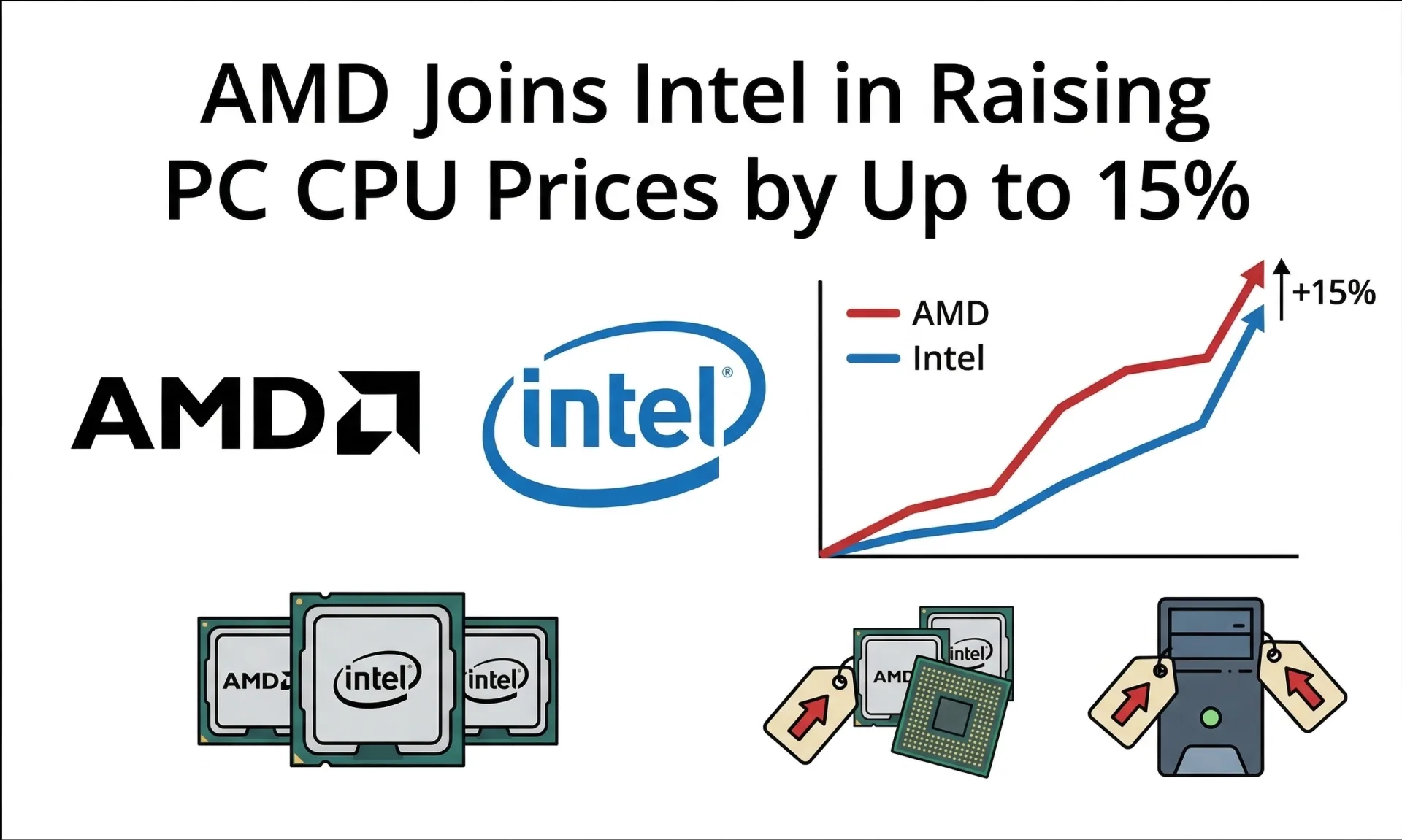

Ripple Effects: Beyond the Bill of Materials

The fallout from this strategic pivot extends far beyond pricing. Micron, for example, discontinued its Crucial consumer brand by early 2026, a move we see as a clear declaration of its priority shift toward server-grade and HBM products for AI infrastructure. While Micron frames the move as a way to "help consumers around the world" by improving supply for larger customers, the reality for DIY PC builders and enthusiasts is significantly fewer options and higher costs. Samsung and SK Hynix are following suit, funneling resources to high-margin AI applications while commodity DRAM withers.

PC manufacturers are not immune: Dell, HP, and Lenovo have already implemented 15–20% price hikes for 2026 systems. These increases are trickling down to consumers, making even basic PC upgrades prohibitively expensive. Cloud infrastructure costs are projected to rise 5–10% between April and September 2026 due to memory constraints, with some cloud providers expecting these increases to become a new, permanent baseline. This will undoubtedly affect businesses that rely on cloud services, with memory-intensive services facing steeper increases.

But the most dramatic illustration of AI’s memory hunger lies in enterprise AI deployments. A single NVIDIA GB300 rack consumes 37TB of fast memory, comprising approximately 20TB of HBM (high-bandwidth GPU memory) and 17TB of LPDDR5X system memory: as much RAM as roughly 2,300 consumer laptops with 16GB each. This isn't just a technical detail; it starkly illustrates how AI is consuming resources that could otherwise support broader technological innovation, potentially leading to a "technological mass extinction event" for certain electronics categories. We question whether the broader tech ecosystem can truly thrive when such a disproportionate share of a fundamental resource is diverted to a single, albeit powerful, sector. The impact is already spreading to other sectors, with reports suggesting that the memory shortage could even delay NVIDIA's next-generation gaming GPUs.
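To put a rack's memory in perspective, here is how many laptops' worth of RAM 37TB represents. The sketch assumes decimal units (1TB = 1000GB) and laptop configurations of 8, 16, and 32GB; those laptop sizes are assumptions, not figures from any report.

```python
def laptop_equivalents(rack_memory_tb: float, laptop_ram_gb: float) -> float:
    """Number of laptops whose combined RAM equals the rack's memory (1TB = 1000GB)."""
    return rack_memory_tb * 1000 / laptop_ram_gb


hbm_tb, lpddr5x_tb = 20, 17        # approximate figures quoted in the article
total_tb = hbm_tb + lpddr5x_tb     # 37TB of fast memory per rack
for ram_gb in (8, 16, 32):         # assumed laptop RAM configurations
    print(f"{ram_gb}GB laptops: {laptop_equivalents(total_tb, ram_gb):,.0f}")
# 8GB laptops: 4,625
# 16GB laptops: 2,312
# 32GB laptops: 1,156
```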

The Long Wait for New Capacity

For those holding out hope for a quick resolution, the timelines for new capacity offer little comfort. Building semiconductor fabs is a monumental undertaking, requiring billions of dollars and years of work. Micron's $10B Japan fab won't operate until late 2028. SK Hynix's U.S. projects aren't expected to ramp until 2027 at the earliest. More broadly, a new fab typically takes 2–3 years and $10–20B in capital to build, and construction in the U.S. takes significantly longer (around 38 months) and costs twice as much as in Taiwan.

This means meaningful new supply won’t arrive for years, even as AI demand continues its relentless growth. Micron itself has warned that the DRAM drought could last until at least 2028. This structural mismatch won’t fix itself overnight, and we believe it's unrealistic to expect a return to pre-AI pricing or availability anytime soon. The industry’s focus on HBM means that while overall DRAM production might grow (projected 24% YoY in 2026), commodity DRAM will continue to be starved of capacity.

Following the Money: A Hard Truth

The core issue, in our view, isn't a lack of innovation or effort but a fundamental misalignment between where capital flows and the needs of the broader tech industry. The market is indeed "following the money," and the money is undeniably in AI. This is rational market behavior for memory manufacturers, as evidenced by the record operating margins reported by companies like SK Hynix (58% in Q4 2025), a dramatic turnaround from negative margins in 2023. The "Hyper-Bull" phase of the memory market has seen supplier leverage reach an all-time high, driven by AI and server demand.

But the collateral damage—soaring prices for everyday devices, constrained cloud infrastructure, stalled enterprise AI deployments, and a frustrated consumer base—reveals how fragile our supply chains have become in the AI era. We anticipate that memory efficiency will become paramount in hardware design, driving innovations in compression and architecture. However, the hard truth remains: the AI boom has reshaped memory markets in ways that won’t benefit everyone equally, and for the foreseeable future, the rest of us are left to grapple with the consequences of AI’s insatiable hunger.
