You don't need an expensive GPU to run a local LLM that actually works

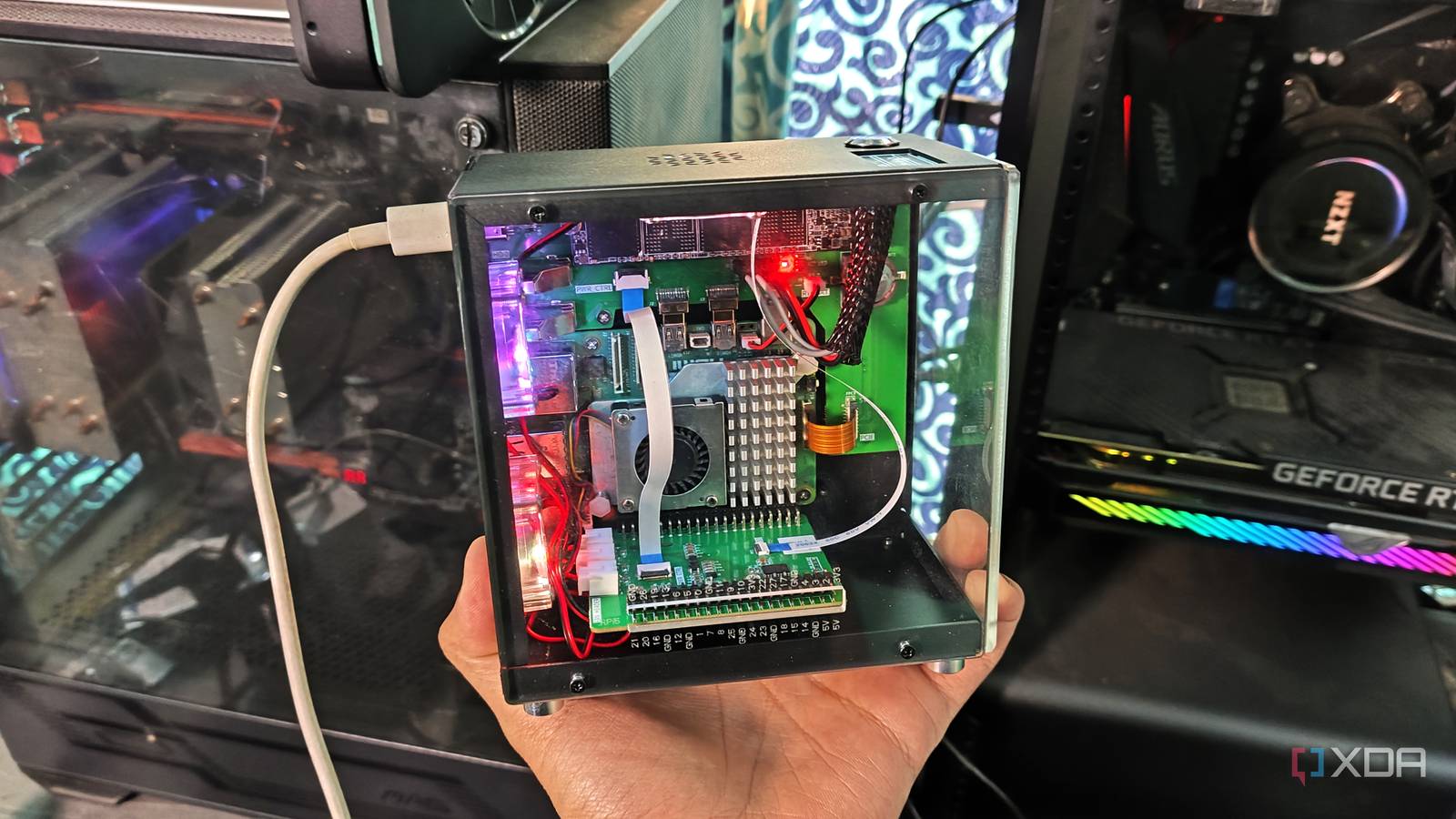

…Related: I built a local LLM server I can access from anywhere, and it uses a Raspberry Pi. It may not replace ChatGPT, but it's good enough for edge projects. Picking…
